How We Built AI-Powered Corporate Dossiers with Claude

The client.companies.dossier() endpoint returns a multi-section due-diligence report on any Swiss company in about 20 seconds. It runs on Claude Sonnet 4.5 via OpenRouter and is grounded in roughly 40 structured signals that we pull from the database before any LLM call. This post covers the engineering choices that keep it fast, accurate, and cheap enough to offer as a standard API call.

The data-first phase

Before we touch the LLM, we run nine database queries in parallel:

- Company base record (name, legal form, capital, industry, auditor, canton, purpose)

- Board members with signing authorities and tenure

- SOGC publications (last 24 months)

- Registry change events (capital, board, auditor, address, status)

- Sanctions screening (SECO + OpenSanctions + FINMA)

- Auditor tenure history

- Corporate structure (parents and subsidiaries, via Zefix + GLEIF)

- Acquisition records

- News articles with sentiment scores

All nine run concurrently via tokio::try_join! in the Rust handler, so total latency is that of the slowest single query (~300 ms, for sanctions screening), not the sum.
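The handler itself is Rust, but the fan-out pattern is easy to see in a short Python sketch (Python to match the SDK examples later in this post). The query names and latencies below are made up for illustration. asyncio.gather plays the role of tokio::try_join!, with one difference worth knowing: gather propagates the first exception but, unlike try_join!, does not cancel the remaining queries.

```python
import asyncio
import time

# Hypothetical stand-ins for the nine queries, with made-up latencies.
SIMULATED_LATENCY_S = {
    "company": 0.05,
    "board": 0.08,
    "sogc": 0.10,
    "registry_changes": 0.07,
    "sanctions": 0.30,  # the slowest query dominates total latency
    "auditor_history": 0.04,
    "structure": 0.12,
    "acquisitions": 0.05,
    "news": 0.09,
}


async def run_query(name: str) -> tuple[str, str]:
    # Simulate database latency, then return placeholder rows.
    await asyncio.sleep(SIMULATED_LATENCY_S[name])
    return name, f"<{name} rows>"


async def gather_signals() -> dict[str, str]:
    # gather() runs all coroutines concurrently and returns results in argument order.
    results = await asyncio.gather(*(run_query(n) for n in SIMULATED_LATENCY_S))
    return dict(results)


if __name__ == "__main__":
    start = time.perf_counter()
    signals = asyncio.run(gather_signals())
    # Roughly the 0.30 s of the slowest query, not the ~0.90 s sum.
    print(f"{len(signals)} queries in {time.perf_counter() - start:.2f}s")
```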

The payoff: by the time we call the LLM, we have a ~4 kB structured context block listing every known fact. The LLM is never asked to remember or recall facts, only to organise and narrate them.
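The post doesn't specify the block's format. Assuming JSON, a minimal sketch of serialising the signals deterministically and checking them against the ~4 kB budget could look like this (function names and the sample company are hypothetical):

```python
import json
from datetime import date


def build_context_block(signals: dict) -> str:
    """Serialise the pre-fetched signals into one deterministic text block."""
    return json.dumps(
        signals,
        ensure_ascii=False,     # keep non-ASCII characters such as umlauts unescaped
        sort_keys=True,         # identical inputs always give identical blocks
        separators=(",", ":"),  # compact output: fewer bytes, fewer tokens
        default=str,            # dates and other non-JSON types become strings
    )


def block_size_bytes(block: str) -> int:
    # Measure UTF-8 bytes, not characters: "ü" counts as two.
    return len(block.encode("utf-8"))


if __name__ == "__main__":
    example = {
        "company": {"name": "Beispiel AG", "seat": "Zürich"},
        "board_members": [],
        "last_sogc": date(2025, 3, 14),
    }
    block = build_context_block(example)
    print(block, block_size_bytes(block))
```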

Prompt architecture

The system prompt is static and gives Claude three things:

- Identity and scope: "You are a Swiss corporate due-diligence analyst..."

- The data contract: exactly which fields the context block contains, with types

- Output spec: "Produce a Markdown report with sections: Overview, Key Facts, Board Composition, Recent Changes, Sanctions Screening, Risk Flags. Cite every claim using the source format [SOGC:2025-03-14] or [Zefix]."

The user message is just the filled-in context plus an instruction like "Generate a comprehensive dossier for {company.name}".
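A sketch of how the static system prompt and the per-request user message might be assembled into the OpenAI-compatible chat-completions body that OpenRouter accepts. The identity line's full wording is elided in the post and the data-contract text is a placeholder; build_request is a hypothetical helper, not part of the vynco SDK.

```python
# Static system prompt: identity, data contract, output spec.
SYSTEM_PROMPT = "\n\n".join([
    # Identity and scope (full wording elided in the post).
    "You are a Swiss corporate due-diligence analyst...",
    # Data contract: placeholder for the field list with types.
    "The context block contains the following fields: <field names and types>.",
    # Output spec, quoted from the post (adjacent literals join into one string).
    "Produce a Markdown report with sections: Overview, Key Facts, Board Composition, "
    "Recent Changes, Sanctions Screening, Risk Flags. Cite every claim using the "
    "source format [SOGC:2025-03-14] or [Zefix].",
    # The "if absent, say so" clause (paraphrased).
    "If a data point is not in the context, write '[not disclosed]'. Never infer.",
])


def build_request(context_block: str, company_name: str, model: str, max_tokens: int) -> dict:
    """Build an OpenAI-style chat-completions body (hypothetical helper)."""
    user_message = f"{context_block}\n\nGenerate a comprehensive dossier for {company_name}"
    return {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_message},
        ],
    }
```

Keeping the system prompt byte-for-byte static across requests also keeps it a stable prefix, which makes prompt behaviour easier to compare between runs.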

Two prompt techniques noticeably improved quality:

Citation requirement. Asking Claude to cite sources, even in a lightweight [Zefix] / [SOGC:date] format, reduced hallucination dramatically. When the model has to attribute a claim, it invents less.
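Citations also make the output checkable after the fact. A hypothetical post-check (not something described as part of our pipeline) could flag any [SOGC:date] tag that doesn't correspond to a publication actually present in the context. This sketch assumes the Zefix base record is always present, so [Zefix] tags always pass.

```python
import re

# Matches the two citation forms from the prompt: [Zefix] and [SOGC:YYYY-MM-DD].
CITATION_RE = re.compile(r"\[(Zefix|SOGC:\d{4}-\d{2}-\d{2})\]")


def unknown_citations(report: str, sogc_dates: set[str]) -> list[str]:
    """Return citation tags in the report that match no source in the context."""
    bad = []
    for match in CITATION_RE.finditer(report):
        tag = match.group(1)
        if tag == "Zefix":
            continue  # assumption: the Zefix base record is always in the context
        if tag.removeprefix("SOGC:") not in sogc_dates:
            bad.append(tag)
    return bad
```

A non-empty result is a cheap signal that the model attributed a claim to a publication it was never shown.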

Explicit "if absent, say so" clause. Most dossier fields are optional. Without guidance, Claude used to write "the company has strong governance" when given an empty board list. Now the prompt includes: "If a data point is not in the context, write '[not disclosed]' — never infer."

Depth tiers

Three dossier depth levels map to three max-token settings:

| Level | max_tokens | Typical output | Approx. cost per dossier |
|---|---|---|---|
| summary | 2 000 | ~800 words | ~$0.02 |
| standard | 3 500 | ~1 400 words | ~$0.04 |
| comprehensive | 6 000 | ~2 400 words | ~$0.08 |

Callers pick a level at request time, e.g. depth="comprehensive". We charge a flat number of credits per level, set so that the credit cost covers our expected OpenRouter spend with a ~30% margin.
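The tier table translates naturally into a small lookup. This sketch uses the approximate costs from the table and assumes the ~30% margin is applied as a markup on expected spend. The names are illustrative, and it returns a USD figure rather than credits, since the credit conversion isn't given here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DepthTier:
    max_tokens: int
    expected_cost_usd: float  # approximate per-dossier spend from the table


DEPTH_TIERS = {
    "summary": DepthTier(2_000, 0.02),
    "standard": DepthTier(3_500, 0.04),
    "comprehensive": DepthTier(6_000, 0.08),
}

MARGIN = 0.30  # assumption: applied as a markup on expected spend


def tier_for(depth: str) -> DepthTier:
    # Reject unknown levels early, before any LLM call is made.
    try:
        return DEPTH_TIERS[depth]
    except KeyError:
        raise ValueError(f"depth must be one of {sorted(DEPTH_TIERS)}, got {depth!r}") from None


def price_usd(depth: str) -> float:
    # Expected spend plus margin, rounded to avoid float noise like 0.10400000000000001.
    return round(tier_for(depth).expected_cost_usd * (1 + MARGIN), 4)
```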

The fast/sophisticated split

Not every LLM call in the platform needs Sonnet 4.5. We split the workload via two environment variables:

- LLM_MODEL (default anthropic/claude-sonnet-4.5): dossiers, comparative analysis, industry reports, timeline summaries. Calls that produce human-readable narrative.

- LLM_FAST_MODEL (default anthropic/claude-haiku-4.5): bulk classification pipelines (industry, role) and media sentiment scoring. Calls that produce structured JSON at scale.
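A minimal sketch of the routing, in Python rather than the handler's Rust. The two environment variable names and their defaults come from the list above; the task labels are hypothetical. Reading the variables at call time, rather than once at import, lets tests override them without code changes.

```python
import os

# Defaults mirror the ones listed above; either can be overridden via the environment.
DEFAULT_MODEL = "anthropic/claude-sonnet-4.5"
DEFAULT_FAST_MODEL = "anthropic/claude-haiku-4.5"

# Hypothetical task labels, for illustration only.
NARRATIVE_TASKS = {"dossier", "comparative_analysis", "industry_report", "timeline_summary"}
BULK_TASKS = {"industry_classification", "role_classification", "media_sentiment"}


def model_for(task: str) -> str:
    """Pick the model ID for a task: narrative work gets the larger model."""
    if task in NARRATIVE_TASKS:
        return os.environ.get("LLM_MODEL", DEFAULT_MODEL)
    if task in BULK_TASKS:
        return os.environ.get("LLM_FAST_MODEL", DEFAULT_FAST_MODEL)
    raise ValueError(f"unknown task: {task!r}")
```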

Haiku is ~12× cheaper than Sonnet. For the ~5 000 companies/day our industry pipeline classifies, that is $2/day versus $24/day: negligible per call, significant in aggregate. Sophistication matters for the dossier (flowing English, correct synthesis); it doesn't matter for "pick one of 30 industry labels".

Failure modes we care about

Upstream LLM returning 400 / 503. Our first production outage of this endpoint was a model-name mismatch: the default LLM_MODEL was pinned to a deprecated Anthropic ID, so every request came back with HTTP 400 (not a valid model ID). The handler now maps any upstream error to a 503 ServiceUnavailable with a plain-English detail rather than a generic 500, and the SDK surfaces it as a ServiceUnavailableError so callers can catch it and retry.
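On the caller side, a retry loop with exponential backoff and jitter is the usual pattern. The exception class below is a local stand-in; in real code you would catch the SDK's ServiceUnavailableError from wherever the vynco package exports it (the import path isn't covered here). The attempt count and delays are illustrative defaults, not recommendations from the SDK.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class ServiceUnavailableError(Exception):
    """Local stand-in for the SDK's ServiceUnavailableError (hypothetical)."""


def with_retries(call: Callable[[], T], attempts: int = 4, base_delay_s: float = 1.0) -> T:
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except ServiceUnavailableError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # Exponential backoff (1 s, 2 s, 4 s, ... by default) plus up to 50% jitter.
            delay = base_delay_s * (2 ** attempt)
            time.sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

For example: with_retries(lambda: client.companies.dossier("CHE-101.329.561", depth="standard")). Jitter keeps many clients that failed at the same moment from retrying in lockstep.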

Context too large. If we pass in too much SOGC history, the request exceeds the model's context window. We truncate SOGC events to the 50 most recent and log whenever the cap triggers. No dossier has hit that ceiling since.
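The cap is simple to express. A sketch, assuming each SOGC event carries a publication date (the SogcEvent type and logger name are hypothetical):

```python
import logging
from dataclasses import dataclass
from datetime import date

logger = logging.getLogger("dossier")

MAX_SOGC_EVENTS = 50  # the cap described above


@dataclass
class SogcEvent:
    published: date
    summary: str


def truncate_sogc(events: list[SogcEvent], limit: int = MAX_SOGC_EVENTS) -> list[SogcEvent]:
    # Sort newest first so the slice keeps the most recent publications.
    ordered = sorted(events, key=lambda e: e.published, reverse=True)
    if len(ordered) > limit:
        # Log every time the cap actually drops events.
        logger.warning("truncating SOGC history: %d events -> %d", len(ordered), limit)
    return ordered[:limit]
```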

Streaming vs all-at-once. Dossier responses are buffered on our side: we want the full text before storing it in the dossiers table for idempotent re-fetch. We don't expose streaming to API callers today; with a median response time of 20 s, perceived latency isn't the bottleneck.
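Buffering first means a dossier is either stored complete or not at all, which is what makes re-fetch safe. A sketch with an in-memory SQLite table; the schema and the (uid, depth) key are assumptions, and generate stands in for the buffered LLM call:

```python
import sqlite3
from typing import Callable, Optional


def init_schema(conn: sqlite3.Connection) -> None:
    # Hypothetical schema: one stored dossier per (company UID, depth).
    conn.execute(
        "CREATE TABLE IF NOT EXISTS dossiers ("
        " uid TEXT NOT NULL,"
        " depth TEXT NOT NULL,"
        " content TEXT NOT NULL,"
        " PRIMARY KEY (uid, depth))"
    )


def _fetch(conn: sqlite3.Connection, uid: str, depth: str) -> Optional[str]:
    row = conn.execute(
        "SELECT content FROM dossiers WHERE uid = ? AND depth = ?", (uid, depth)
    ).fetchone()
    return None if row is None else row[0]


def get_or_create_dossier(
    conn: sqlite3.Connection, uid: str, depth: str, generate: Callable[[str, str], str]
) -> str:
    cached = _fetch(conn, uid, depth)
    if cached is not None:
        return cached  # re-fetch: no second LLM call
    # Generate the complete text first; a partial stream is never persisted.
    content = generate(uid, depth)
    # OR IGNORE: if a concurrent request stored a row first, keep that one.
    conn.execute(
        "INSERT OR IGNORE INTO dossiers (uid, depth, content) VALUES (?, ?, ?)",
        (uid, depth, content),
    )
    conn.commit()
    stored = _fetch(conn, uid, depth)
    assert stored is not None
    return stored
```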

What the dossier doesn't try to do

- No browsing. The model only sees what we passed in. If you want live news, use the separate companies.media() endpoint and combine the results client-side.

- No predictions. We don't ask the model to forecast financial health. Multi-signal prediction is a separate algorithmic endpoint (ai.risk_score) without an LLM on the critical path.

- No opinions. The prompt is tuned to be descriptive, not evaluative. "The company increased capital by 15% in 2023" is in scope; "This suggests aggressive expansion" is not.

These are design choices, not limitations. They make the dossier predictable, auditable, and cheap.

How to try it

import vynco

client = vynco.Client()

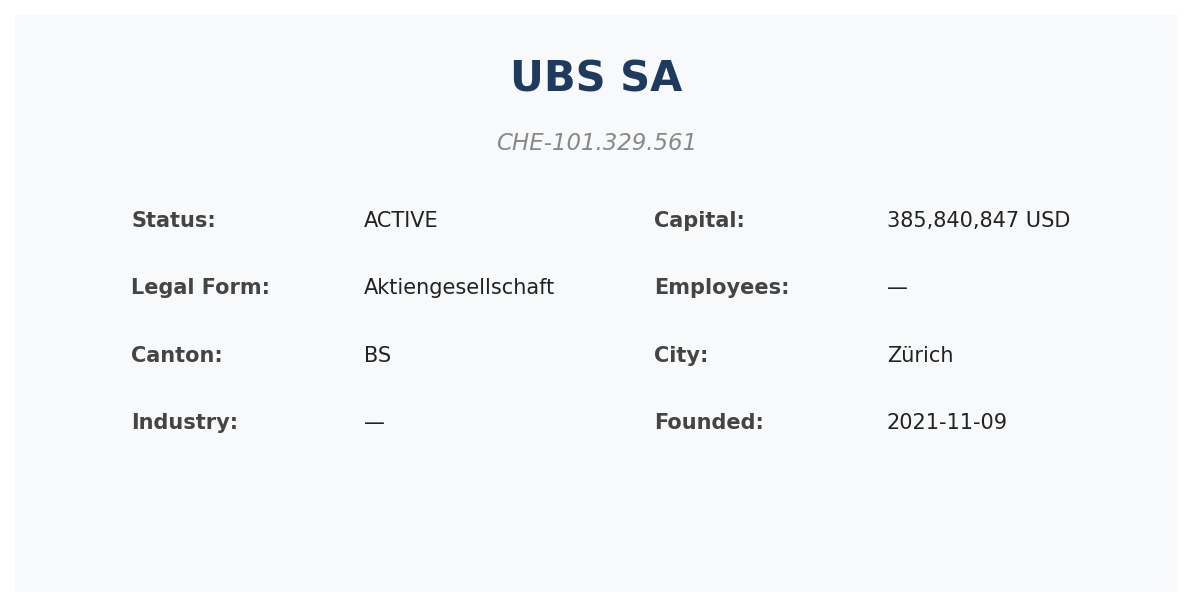

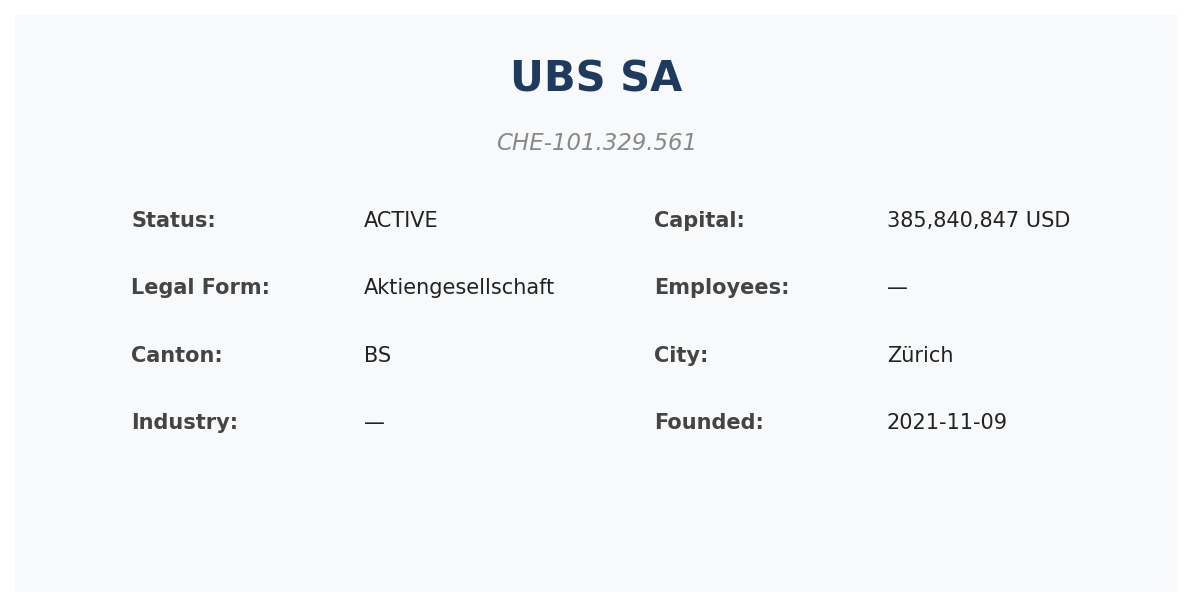

dossier = client.companies.dossier("CHE-101.329.561", depth="standard").data

print(dossier.content) # full markdown

print(dossier.sources) # list of citation sources

Or, for the timeline-specific narrative:

```python
summary = client.companies.timeline_summary("CHE-101.329.561").data
```

Links

- API docs: vynco.ch/docs/dossiers

- SDK: github.com/VynCorp/vc-python

- Pricing: vynco.ch/pricing